Smart leaders know that knowledge management has a positive influence on workforce morale and performance. When employees have easy access to documents, expertise, and one another, they waste less time looking for information, collaborate more efficiently to solve problems, and are better able to learn and grow in their roles. It seems obvious that this would boost the bottom line, but the connection between KM and business outcomes can be hard to prove.

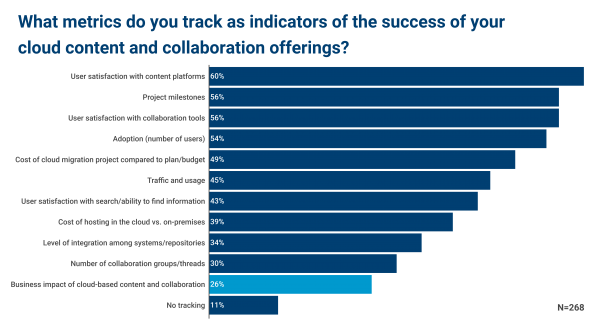

The sad truth is that relatively few organizations even attempt to measure the business value of KM and related initiatives. Consider APQC’s latest research on Enterprise Content and Collaboration in the Cloud as an example. A majority of survey respondents say their organizations track project milestones related to moving content and collaboration to the cloud, the adoption rate for cloud platforms, and user satisfaction with new capabilities. But only 26 percent go beyond activity and process measures to examine the effect that cloud-based content and collaboration have on the business. (This number tracks with what we see in our overall KM benchmarks and metrics research, which I wrote about last year.)

Your Measurement Strategy May Be Putting You at Risk

Why do so few KM programs track their business impact? The short answer is that it’s hard. Knowledge and learning are intangibles, and it’s tricky to determine the extent to which knowing something influences the outcome of a particular transaction, decision, or project. So organizations fall back on measures that are easier to track, such as whether things are getting done, how many people are using the tools and approaches, and how much they like them.

The danger of disregarding outcome measures is twofold.

First, when you measure only activities and satisfaction, you may miss early-warning signs that KM is out of step with the business or fail to recognize urgent knowledge problems you need to tackle. Aligning KM activities and measures to things like revenue, cost savings, and quality improvements helps ensure that you’re focused on activities that will actually make a difference. User satisfaction can be a reasonable proxy for value, but the two do not always track together.

Second, a dearth of impact measures makes it harder to justify KM’s existence when there’s a big shakeup. A new CEO, strategic shift, merger or acquisition, or restructuring can put even a long-standing KM program under the microscope. When making their case, KM leaders must be persuasive about why funding should stay in place—and why KM is more integral than other support services, assuming executives intend to cut something. Under these circumstances, KM is in a much better position if it has concrete data to convey return on investment.

Tips and Techniques to Measure KM Value

Despite the challenges involved, KM programs have many creative ways to demonstrate value.

APQC recommends a technique called value path measurement, which involves taking data on KM activities (e.g., from before vs. after a KM implementation or from teams with low vs. high usage) and correlating it with key performance indicators such as cycle time, quality rate, customer service statistics, or profitability. This can help you determine, for instance, whether teams that use lessons learned are more likely to complete projects on time and on budget, or whether manufacturing locations that participate in communities of practice have lower error rates.
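To make the idea of correlating KM activity with a KPI concrete, here is a minimal Python sketch. It is only an illustration, not APQC’s method: the team records, the field names (`km_usage`, `overrun_days`), and the choice of a Pearson correlation coefficient are all assumptions made for the example, and the numbers are invented.

```python
from math import sqrt


def pearson(xs, ys):
    """Return the Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of the same length with at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: how often each team consulted lessons learned on a
# project, and how many days that project ran past its deadline.
teams = [
    {"team": "A", "km_usage": 2, "overrun_days": 14},
    {"team": "B", "km_usage": 5, "overrun_days": 11},
    {"team": "C", "km_usage": 8, "overrun_days": 9},
    {"team": "D", "km_usage": 12, "overrun_days": 4},
    {"team": "E", "km_usage": 15, "overrun_days": 2},
]

usage = [t["km_usage"] for t in teams]
overrun = [t["overrun_days"] for t in teams]

r = pearson(usage, overrun)
print(f"Correlation between KM usage and schedule overrun: {r:.2f}")
```

In this made-up data set the coefficient is strongly negative, meaning higher usage goes along with smaller overruns. A real analysis would need far more data points, and a correlation on its own does not show that KM caused the improvement, so treat a result like this as a starting point for the conversation rather than proof.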

Other popular techniques include:

- Quantifying success stories—for example, identifying situations where a community of practice or ask-the-expert service allowed employees to obtain add-on business from a key client or avoid a multi-million-dollar mistake and then rolling up those instances into an overall estimate of value

- Performing direct comparisons—for example, tracking the response time and first-contact resolution rate for customer service agents before and after the rollout of a new KM repository

- Asking users about impact—for example, surveying employees about the number of hours per month they save by using KM resources and then multiplying that number by each employee’s hourly rate to estimate cost savings
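The survey-based estimate in the last bullet comes down to simple arithmetic: hours saved per month multiplied by each respondent’s hourly rate, summed across respondents. The Python sketch below shows one way to do that. The field names, the sample responses, and the optional `discount` factor (a way to be conservative about self-reported figures) are assumptions for illustration only.

```python
def estimate_monthly_savings(responses, discount=1.0):
    """Estimate monthly cost savings from self-reported time savings.

    responses: list of dicts with 'hours_saved_per_month' and 'hourly_rate'.
    discount:  factor between 0 and 1 applied to the total to hedge against
               over-reporting (1.0 means take the survey answers at face value).
    """
    if not 0 <= discount <= 1:
        raise ValueError("discount must be between 0 and 1")
    total = sum(
        r["hours_saved_per_month"] * r["hourly_rate"] for r in responses
    )
    return total * discount


# Hypothetical survey responses
survey = [
    {"employee": "analyst", "hours_saved_per_month": 4.0, "hourly_rate": 50.0},
    {"employee": "engineer", "hours_saved_per_month": 2.5, "hourly_rate": 40.0},
    {"employee": "manager", "hours_saved_per_month": 0.0, "hourly_rate": 60.0},
]

face_value = estimate_monthly_savings(survey)
conservative = estimate_monthly_savings(survey, discount=0.5)
print(f"Estimated monthly savings: {face_value:.2f}")
print(f"Conservative estimate (50% discount): {conservative:.2f}")
```

Reporting a discounted figure alongside the face-value one can help when the audience is skeptical of numbers based on perceived rather than observed impact.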

The best technique for you depends on why the KM program exists in the first place, the tools and approaches in place, and your organization’s measurement culture. Direct-comparison measures are extremely powerful, but much easier to achieve when working with transactional roles vs. more strategic or creative ones where productivity itself is harder to measure. Some KM leaders use survey data on time savings to great effect, whereas other executives see these calculations as “fluff” since they are based on perceived (rather than observed) impact.

In addition to choosing a good measurement strategy, think carefully about the right business KPIs to target. If the business strategy is laser-focused on innovation, showing that KM improves quality may not be sufficiently compelling evidence of value to garner sustained support.

The winners of APQC’s 2019 Excellence in Knowledge Management award are good role models for pinpointing and measuring what matters to the business. For instance, Mercer’s KM program tracks its contribution to a range of consulting objectives including revenue contribution from products sold, operational efficiency and time saved, colleague engagement, compliance, and risk mitigation. Avoiding risk is a major business concern at Bupa Health Insurance, so its Knowledge Solutions team correlates KM usage against how frequently staff give misinformation to customers as a way to show KM’s impact on risk minimization. At Shell, the KM team uses quantified success stories to calculate cost savings from KM. Even using a conservative formula, the team has documented savings nearing $500 million.

If you want to learn more about best practices in KM measurement, I recommend the following articles published this year:

- Measure What Matters to the Business in KM

- Promote Knowledge Management with Success Stories and Data

Even more information can be found in APQC’s Measuring Knowledge Management Initiatives collection.